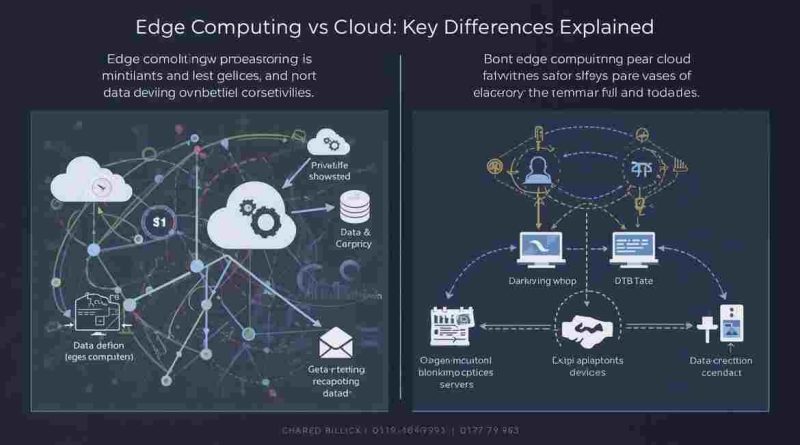

Edge Computing vs Cloud: Key Differences Explained

As digital systems grow faster and smarter, where data gets processed matters more than ever. Traditionally, cloud computing handled most workloads by sending data to centralized servers. However, as applications demand real-time responses, edge computing has emerged as a powerful alternative.

Edge computing and cloud computing are not enemies. Instead, they solve different problems. Understanding how they differ and when to use each helps businesses, developers, and decision-makers design better systems.

This article compares edge computing and cloud computing using clear definitions, real use cases, decision rules, and practical examples.

Clear Definitions

Cloud computing processes data in centralized data centers accessed over the internet.

Edge computing processes data close to where it is generated, such as on devices, sensors, or local servers.

This single difference, where computation happens, drives all the other distinctions.

How Cloud Computing Works

Cloud computing relies on large, centralized data centers operated by providers like AWS, Azure, and Google Cloud. Devices send data over the internet to these centers, where servers process it and send results back.

Because of this structure, cloud computing offers:

- Massive scalability

- Centralized management

- Powerful compute resources

- Global availability

For example, when a mobile app stores user data, runs analytics, or trains AI models, it usually relies on cloud infrastructure. Many AI workloads also depend on cloud platforms because they require large-scale processing, as explained in "What Is AI in Cloud Computing".

However, sending data back and forth introduces latency, which becomes a problem for time-sensitive tasks.

How Edge Computing Works

Edge computing moves processing closer to the source of data. Instead of sending everything to the cloud, devices or nearby servers analyze data locally.

This approach:

- Reduces latency

- Saves bandwidth

- Improves real-time performance

- Enhances data privacy

For example, a security camera using edge computing can detect motion locally instead of sending raw video footage to the cloud continuously.

Edge computing works especially well when systems must react instantly or operate with limited connectivity.
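To make the security-camera example concrete, here is a minimal sketch of local motion detection by frame differencing. It assumes frames arrive as equal-sized grayscale grids of integers from 0 to 255. The function names and both thresholds are hypothetical choices for illustration, not values from any real camera product. Only a small "motion detected" event, rather than the raw frame, would then need to leave the device.

```python
from typing import List

Frame = List[List[int]]  # grayscale pixels, 0-255 (assumed input format)


def changed_fraction(prev: Frame, curr: Frame, pixel_threshold: int = 25) -> float:
    """Fraction of pixels whose intensity changed by more than pixel_threshold."""
    total = 0
    changed = 0
    for row_prev, row_curr in zip(prev, curr):
        for a, b in zip(row_prev, row_curr):
            total += 1
            if abs(a - b) > pixel_threshold:
                changed += 1
    return changed / total if total else 0.0


def detect_motion(prev: Frame, curr: Frame, area_threshold: float = 0.05) -> bool:
    """Report motion when more than area_threshold of the frame changed."""
    return changed_fraction(prev, curr) > area_threshold


if __name__ == "__main__":
    still = [[10] * 4 for _ in range(4)]
    moved = [row[:] for row in still]
    moved[0][0] = 200  # 1 of 16 pixels changes: 6.25% of the frame
    print(detect_motion(still, still))  # False
    print(detect_motion(still, moved))  # True
```

Production systems typically use more robust methods (background subtraction, neural detectors), but the principle is the same: decide on the device, send only the result.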

Core Differences Between Edge and Cloud Computing

1. Latency

Latency is the delay between sending data and receiving a response, including the time the data spends traveling to where it is processed and back.

- Cloud computing introduces higher latency because data travels to distant servers.

- Edge computing minimizes latency by processing data nearby.

Decision rule:

If milliseconds matter, edge computing is the better choice.
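Distance alone puts a floor under cloud latency. The sketch below estimates the minimum round-trip time from distance, assuming signals travel through optical fiber at roughly 200,000 km/s (about two-thirds of the speed of light in a vacuum). The function name and example distances are hypothetical, and real network paths add routing and queuing delays on top of this bound.

```python
# Approximation: light in optical fiber covers about 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float, processing_ms: float = 0.0) -> float:
    """Lower bound on round-trip time: propagation both ways plus processing.

    Ignores routing, queuing, and serialization delays, which only add to it.
    """
    return 2 * distance_km / FIBER_KM_PER_MS + processing_ms


if __name__ == "__main__":
    # Hypothetical distances: a nearby edge server vs. a distant cloud region.
    print(min_round_trip_ms(10, processing_ms=2))    # 2.1
    print(min_round_trip_ms(1500, processing_ms=2))  # 17.0
```

Even before any congestion, a data center 1,500 km away costs at least 15 ms per round trip, which can already exceed the budget of a tight control loop.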

2. Bandwidth Usage

Cloud systems often transmit large amounts of raw data. Over time, this increases bandwidth costs.

Edge systems filter and process data locally. As a result, they send only useful insights to the cloud.

Decision rule:

If data volume is high but only summaries matter, edge computing reduces costs.
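A minimal sketch of that edge-side filtering, assuming a device that collects numeric sensor readings and forwards one summary per time window instead of every sample. The summary fields and the one-hour window are illustrative assumptions; a real deployment would choose the statistics its cloud analytics actually need.

```python
import json
from statistics import mean
from typing import Dict, List


def summarize(readings: List[float]) -> Dict[str, float]:
    """Reduce a window of raw sensor readings to a compact summary."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
    }


if __name__ == "__main__":
    # Hypothetical window: one temperature reading per second for an hour.
    raw = [20.0 + (i % 60) / 100 for i in range(3600)]
    raw_bytes = len(json.dumps(raw).encode())
    summary_bytes = len(json.dumps(summarize(raw)).encode())
    print(f"raw: {raw_bytes} bytes, summary: {summary_bytes} bytes")
```

The trade-off is that detail discarded at the edge cannot be recovered later, so the summary has to match what the cloud side will ask of it.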

3. Scalability

Cloud computing scales on demand. You can add storage or compute power in minutes.

Edge computing scales differently. Each new device adds capacity, but management becomes more complex.

Decision rule:

If you need rapid global scaling, cloud computing fits better.

4. Reliability and Connectivity

Cloud systems depend heavily on internet access. When connectivity fails, services may stop working.

Edge systems can keep operating with weak or no connectivity, because critical processing happens on the device or a local server.

Decision rule:

If uptime is critical in unstable networks, edge computing is safer.
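A common pattern behind this resilience is store-and-forward: the edge node keeps working and queues outbound messages locally, then flushes them once the link returns. This is a minimal sketch; the `uplink` callable is a hypothetical stand-in for a real network client, and the buffer size is an arbitrary assumption.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForward:
    """Queue outbound messages locally and flush them when the link returns.

    `uplink` is any callable that returns True on successful delivery
    (a hypothetical stand-in for a real network client).
    """

    def __init__(self, uplink: Callable[[Dict], bool], max_buffer: int = 1000):
        self.uplink = uplink
        # Bounded buffer: when full, the oldest messages are dropped first.
        self.buffer: Deque[Dict] = deque(maxlen=max_buffer)

    def publish(self, message: Dict) -> None:
        self.buffer.append(message)
        self.flush()

    def flush(self) -> int:
        """Send buffered messages in order; stop at the first failure."""
        sent = 0
        while self.buffer:
            if not self.uplink(self.buffer[0]):
                break  # still offline: keep the message for the next attempt
            self.buffer.popleft()
            sent += 1
        return sent


if __name__ == "__main__":
    online = False
    delivered = []

    def fake_uplink(msg: Dict) -> bool:
        if online:
            delivered.append(msg)
        return online

    node = StoreAndForward(fake_uplink, max_buffer=3)
    for i in range(5):
        node.publish({"reading": i})  # publishing never blocks while offline
    online = True
    node.flush()
    print(delivered)  # [{'reading': 2}, {'reading': 3}, {'reading': 4}]
```

Dropping the oldest messages when the buffer fills is one design choice; a system that must never lose data would persist the queue to local storage instead.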

5. Data Privacy and Compliance

Cloud computing stores data in centralized locations, which may raise regulatory concerns.

Edge computing keeps sensitive data local, reducing exposure.

This matters in industries that require strong security controls, where processing data locally limits risk (similar concerns are discussed in "Wireless Security Techniques").

Decision rule:

If regulations restrict data movement, edge computing offers more control.
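One simple way to keep sensitive data local is an allowlist applied on the device before anything is uploaded. This sketch assumes records are plain dictionaries; the field names and the `ALLOWED_FIELDS` policy are hypothetical and would come from your own compliance requirements.

```python
from typing import Any, Dict

# Hypothetical policy: only these fields may leave the device.
ALLOWED_FIELDS = {"device_id", "timestamp", "heart_rate_avg", "alert"}


def prepare_upload(record: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only allowlisted fields; everything else stays on the edge device."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


if __name__ == "__main__":
    local_record = {
        "device_id": "wearable-17",
        "timestamp": "2026-01-15T10:00:00Z",
        "patient_name": "Jane Doe",
        "raw_ecg": [0.12, 0.15, 0.11],
        "heart_rate_avg": 72,
        "alert": False,
    }
    print(prepare_upload(local_record))
```

An allowlist is used rather than a blocklist so that any new, unreviewed field stays local by default.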

Edge Computing vs Cloud: Side-by-Side Summary

| Aspect | Edge Computing | Cloud Computing |
|---|---|---|
| Processing location | Near data source | Central data centers |
| Latency | Very low | Higher |
| Internet dependency | Low | High |
| Scalability | Distributed | Massive |
| Data privacy | Stronger | Depends on provider |
| Maintenance | Complex | Centralized |

Real-World Use Cases for Cloud Computing

Cloud computing remains essential in many scenarios.

Big Data Analytics

Cloud platforms process large datasets efficiently using distributed systems.

AI Model Training

Training large AI models requires massive compute power, which cloud environments provide.

Web Applications

Most websites and apps rely on cloud servers for hosting, databases, and APIs.

Disaster Recovery

Cloud backups protect systems against data loss and outages.

In these cases, centralization improves efficiency and cost management.

Real-World Use Cases for Edge Computing

Edge computing excels where speed and autonomy matter.

Internet of Things (IoT)

Sensors process data locally to respond instantly.

Autonomous Vehicles

Vehicles analyze surroundings in real time without waiting for cloud responses.

Smart Manufacturing

Machines detect faults instantly, preventing downtime.

Healthcare Devices

Wearables and monitors analyze data locally to trigger alerts quickly.

These systems benefit from immediate decision-making.

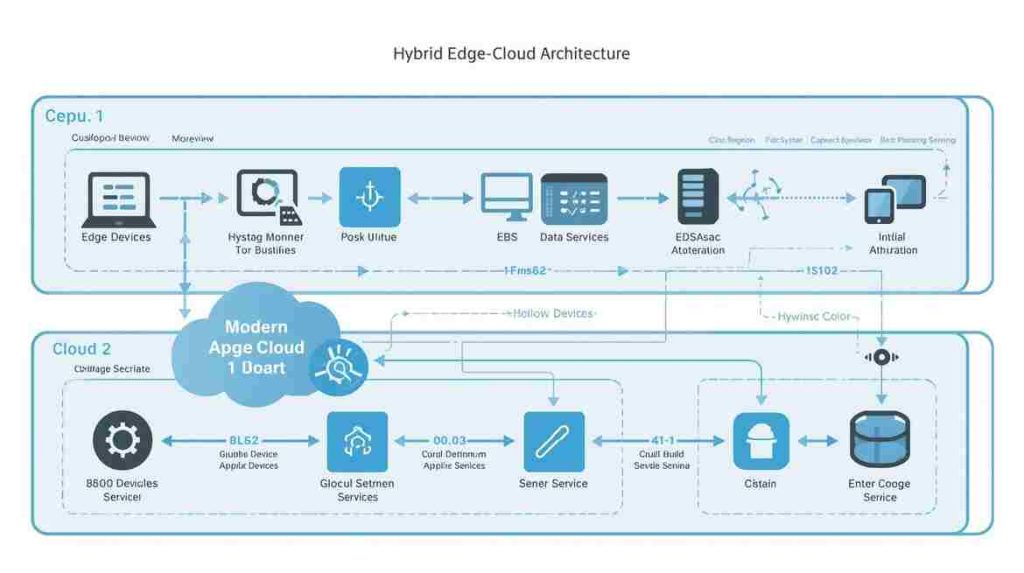

Hybrid Approach: Edge + Cloud Together

In practice, most modern systems use both edge and cloud computing.

This hybrid model works as follows:

- Edge handles real-time processing

- Cloud handles storage, analytics, and long-term learning

For example, an edge device may detect anomalies instantly, while the cloud analyzes trends over time.
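The edge half of this pattern can be sketched as a rolling z-score detector that runs on the device and reacts immediately, while flagged events and periodic summaries go to the cloud for trend analysis. The class name, window size, and 3-sigma threshold are illustrative assumptions, and a z-score test is only one of many possible anomaly detectors.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque


class EdgeAnomalyDetector:
    """Flag readings that deviate sharply from a rolling window (z-score test)."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0):
        self.history: Deque[float] = deque(maxlen=window)
        self.z_threshold = z_threshold

    def check(self, value: float) -> bool:
        """Return True if value is anomalous relative to recent history."""
        anomalous = False
        recent = list(self.history)
        if len(recent) >= 2:
            mu = mean(recent)
            sigma = pstdev(recent)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.z_threshold
            else:
                # Perfectly flat history: any change at all is unusual.
                anomalous = value != mu
        self.history.append(value)
        return anomalous


if __name__ == "__main__":
    detector = EdgeAnomalyDetector(window=10, z_threshold=3.0)
    stream = [50.0, 51.0, 49.5, 50.5, 50.2, 49.8, 50.1, 95.0, 50.3]
    for reading in stream:
        if detector.check(reading):
            # Act locally right away; the event can also be queued for the cloud.
            print("anomaly:", reading)  # prints once: anomaly: 95.0
```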

The most effective architectures in 2026 combine edge computing for speed and cloud computing for scale.

This balanced approach avoids the limitations of using only one model.

How to Choose Between Edge and Cloud

Use this simple framework when deciding:

- Speed requirement – Do actions need to happen instantly?

- Data volume – Is raw data large or continuous?

- Connectivity – Is internet access reliable?

- Privacy rules – Does data need to stay local?

- Cost structure – Are bandwidth costs a concern?

If most answers point toward immediacy and autonomy, edge computing fits better. Otherwise, cloud computing remains the stronger option.
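The framework above can be expressed as a simple majority vote. This sketch assumes each question is answered yes or no, phrased so that True points toward the edge (the connectivity question becomes "Is internet access unreliable?"), and reads "most answers" as at least three of five. The names are hypothetical, and the equal weighting is an assumption: in practice a single hard constraint, such as a strict latency or data-residency requirement, can decide on its own.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Answers to the five framework questions (True points toward edge)."""
    needs_instant_action: bool
    large_or_continuous_data: bool
    unreliable_connectivity: bool
    data_must_stay_local: bool
    bandwidth_cost_concern: bool


def recommend(w: Workload) -> str:
    votes = sum([
        w.needs_instant_action,
        w.large_or_continuous_data,
        w.unreliable_connectivity,
        w.data_must_stay_local,
        w.bandwidth_cost_concern,
    ])
    # "Most answers" read as a simple majority: at least 3 of 5.
    return "edge" if votes >= 3 else "cloud"


if __name__ == "__main__":
    factory_line = Workload(True, True, False, False, True)
    web_app = Workload(False, False, False, False, False)
    print(recommend(factory_line))  # edge
    print(recommend(web_app))       # cloud
```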

Future Outlook: 2026 and Beyond

As systems become smarter, edge and cloud will continue to evolve together. Edge devices will gain more intelligence, while cloud platforms will focus on orchestration, learning, and coordination.

Technologies such as ambient systems and AI-native platforms rely heavily on this balance. Instead of choosing one side, future architectures will optimize where each task belongs.

Final Thoughts

Edge computing vs cloud computing is not a battle; it’s a design choice. Each model serves a purpose, and understanding their differences helps build faster, safer, and more efficient systems.

By choosing wisely or combining both, organizations can meet performance demands without sacrificing scalability or control.

FAQs

What is the main difference between edge computing and cloud computing?

Edge computing processes data near the source, while cloud computing processes data in centralized data centers.

Will edge computing replace cloud computing?

No. Edge complements the cloud by handling real-time tasks, while the cloud manages storage and large-scale processing.

Is edge or cloud computing better for AI?

Edge works best for real-time inference, while cloud platforms handle AI model training and analytics.

Can edge and cloud computing be used together?

Yes. Hybrid systems use edge for speed and cloud for scalability, which is the most common approach today.